Last updated: April 2026

If you’ve spent any time researching AI certifications, you’ve run into the terms “AI,” “machine learning,” and “generative AI” used interchangeably, sometimes by people who should know better. Before you commit time and money to a certification path, it’s worth being clear on what each actually means and how they relate.

This isn’t a deep technical explainer. It’s a practical map of the territory.

Artificial Intelligence: The Broadest Category

Artificial intelligence is the umbrella term. It refers to any computer system designed to perform tasks we’d normally associate with human intelligence: recognizing images, understanding spoken language, making decisions, translating text, or playing chess.

The key word is any. AI is a goal, not a specific technology. The methods used to achieve that goal have changed dramatically over the decades, and the definition has shifted along with them. Systems that seemed like impressive AI in the 1990s, like rule-based expert systems that diagnosed medical conditions, look nothing like what we call AI today.

When someone in 2026 says “AI,” they usually mean one of the specific approaches below. The term has become so broad it’s almost more useful as a marketing category than a technical one.

Machine Learning: Teaching by Example

Machine learning is the approach that has dominated AI for the past decade or so. Instead of writing explicit rules (“if the email contains these words, mark it as spam”), you feed a system thousands of examples and let it figure out the patterns itself.

The core idea: given enough data, algorithms can learn to make predictions or decisions without being explicitly programmed for every scenario.
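To make the contrast concrete, here is a minimal sketch of a hand-written spam rule next to a filter that learns word counts from labeled examples. The four training emails, the function names, and the scoring scheme are all invented for illustration; real spam filters use far more data and more careful statistics.

```python
from collections import Counter

# Hypothetical toy data, invented for this illustration only.
TRAINING_EMAILS = [
    ("win a free prize now", "spam"),
    ("free money claim your prize", "spam"),
    ("meeting moved to friday", "ham"),
    ("lunch on friday with the team", "ham"),
]


def rule_based_is_spam(text):
    """Explicit rule: a person picked these trigger words by hand."""
    return any(word in ("free", "prize") for word in text.split())


def train(examples):
    """Count how often each word appears in spam and in non-spam ("ham")."""
    counts = {"spam": Counter(), "ham": Counter()}
    for text, label in examples:
        counts[label].update(text.split())
    return counts


def learned_is_spam(counts, text):
    """Flag a message whose words showed up more often in spam than in ham."""
    score = sum(counts["spam"][w] - counts["ham"][w] for w in text.split())
    return score > 0


model = train(TRAINING_EMAILS)
message = "claim your money now"
print(rule_based_is_spam(message))      # the hand-written rule has no matching word
print(learned_is_spam(model, message))  # the learned counts still recognize the pattern
```

Nobody told the learned version that “claim” or “money” were suspicious. It picked that up from the examples, which is the whole point.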

A few practical examples of ML in the real world:

- Netflix recommending shows based on your watch history

- Your bank flagging an unusual transaction

- A hospital system predicting which patients are at risk of readmission

- Spam filters that get better over time

ML requires a lot of data (labeled data, in the supervised case), computing power to train the model, and ongoing work to maintain accuracy as the world changes. It’s not magic. It’s pattern matching at scale.

The main types of ML you’ll encounter:

Supervised learning — the model learns from labeled examples. You show it thousands of photos labeled “cat” or “not cat” until it can sort new photos on its own.

Unsupervised learning — the model finds patterns in unlabeled data. Useful for clustering customers into groups or detecting anomalies.

Reinforcement learning — the model learns by trial and error, getting rewarded for good decisions. AlphaGo Zero, DeepMind’s Go-playing AI, mastered the board game Go by playing millions of games against itself with no human game data at all.
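The trial-and-error loop behind reinforcement learning can be sketched with a much simpler problem than Go: choosing between a few slot machines with unknown payout rates. This is a standard “epsilon-greedy” strategy, not how AlphaGo Zero works, and the payout rates, function name, and parameters below are assumptions made up for the example.

```python
import random


def run_bandit(payout_rates, steps=5000, epsilon=0.1, seed=0):
    """Learn which option pays best purely from rewards, with no labeled data."""
    rng = random.Random(seed)
    pulls = [0] * len(payout_rates)
    estimates = [0.0] * len(payout_rates)  # running average reward per option

    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try a random option.
            arm = rng.randrange(len(payout_rates))
        else:
            # Exploit: pick the option that has looked best so far.
            arm = max(range(len(payout_rates)), key=lambda i: estimates[i])

        # The environment hands back a reward of 1 or 0.
        reward = 1.0 if rng.random() < payout_rates[arm] else 0.0

        # Nudge the running average toward the observed reward.
        pulls[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / pulls[arm]

    return pulls, estimates


# Hypothetical payout rates; the learner never sees these numbers directly.
pulls, estimates = run_bandit([0.2, 0.5, 0.8])
print(pulls)
print([round(e, 2) for e in estimates])
```

With these settings the third option should end up chosen far more often than the others, because its rewards keep reinforcing that choice.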

Deep Learning: A Subset of ML Worth Knowing

You’ll also hear “deep learning” thrown around. It’s a specific type of machine learning that uses neural networks, loosely inspired by how the brain works, stacked into many processing layers.
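If “stacked into many processing layers” sounds abstract, this small sketch shows the shape of the idea: each layer takes the previous layer’s output, applies weights, and passes the result on. The weights here are hand-picked placeholders; in real deep learning they are learned from data, and networks have many more layers and millions or billions of weights.

```python
def relu(values):
    """A common activation function: negative numbers become zero."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One layer: every output is a weighted sum of all inputs, plus a bias."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Feed the input through each layer in turn; this stacking is the 'deep' part."""
    activations = inputs
    for weights, biases in layers:
        activations = relu(dense(activations, weights, biases))
    return activations


# Hypothetical hand-picked weights: 2 inputs -> 2 hidden units -> 1 output.
LAYERS = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
    ([[1.0, 1.0]], [-1.0]),
]

print(forward([2.0, 1.0], LAYERS))  # [1.5]
```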

Deep learning is what made the recent wave of AI practical. It’s behind image recognition, voice assistants, language translation, and most of what we now recognize as modern AI. It needs a lot of computing resources but achieves accuracy levels that earlier ML approaches couldn’t get close to.

You don’t need to understand the math to work with AI systems, but knowing that deep learning sits underneath most current AI products is useful context.

Generative AI: Creating, Not Just Classifying

Traditional ML is mostly about classification and prediction. Is this email spam? Will this customer churn? What’s the likely diagnosis?

Generative AI does something different. It creates new content: text, images, audio, video, code. It generates outputs that didn’t exist before rather than sorting or predicting from existing categories.

The technology behind most of today’s text-based generative AI is the large language model, or LLM. LLMs are trained on enormous amounts of text and learn to predict what comes next in a sequence. That objective, applied at massive scale, produces systems that can write convincingly, answer questions, translate languages, summarize documents, and write functional code. Image, audio, and video generators often use other model families, such as diffusion models.
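“Predict what comes next” can be shown with a toy that is nothing like a real LLM in scale or method: it simply counts which word follows which in a tiny made-up corpus, then generates text by repeatedly picking the most common follower. Real LLMs use neural networks over sub-word tokens and weigh the whole preceding context, so treat this as a sketch of the objective only. The corpus and function names are assumptions for the example.

```python
from collections import Counter, defaultdict

# Hypothetical three-sentence corpus, invented for this illustration.
CORPUS = "the cat sat on the mat . the cat sat on the rug . the dog ate the fish ."


def train_bigrams(text):
    """For every word, count which words came right after it."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the follower seen most often, or None for an unseen word."""
    if word not in follows:
        return None
    return follows[word].most_common(1)[0][0]


def generate(follows, start, length=4):
    """Generate text one word at a time by repeated next-word prediction."""
    words = [start]
    for _ in range(length):
        nxt = predict_next(follows, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)


model = train_bigrams(CORPUS)
print(predict_next(model, "the"))  # cat
print(generate(model, "the"))      # the cat sat on the
```

The output is new text assembled from learned patterns rather than a label chosen from a fixed list, which is the shift the next few paragraphs describe.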

ChatGPT, Claude, Gemini, and Copilot are all generative AI products built on LLMs. Amazon Bedrock, Azure OpenAI Service, and Google Vertex AI are the cloud platforms enterprises use to build their own generative AI applications on top of those models.

What makes generative AI different from earlier AI:

- It works well with minimal examples. You don’t need thousands of labeled training samples.

- It can handle tasks it wasn’t explicitly trained for.

- It generates open-ended outputs rather than choosing from predefined categories.

- It can follow plain English instructions rather than requiring structured inputs.

That last point is why generative AI spread so quickly. Earlier ML systems required data scientists to configure them. Generative AI tools can be picked up by almost anyone.

How They Fit Together

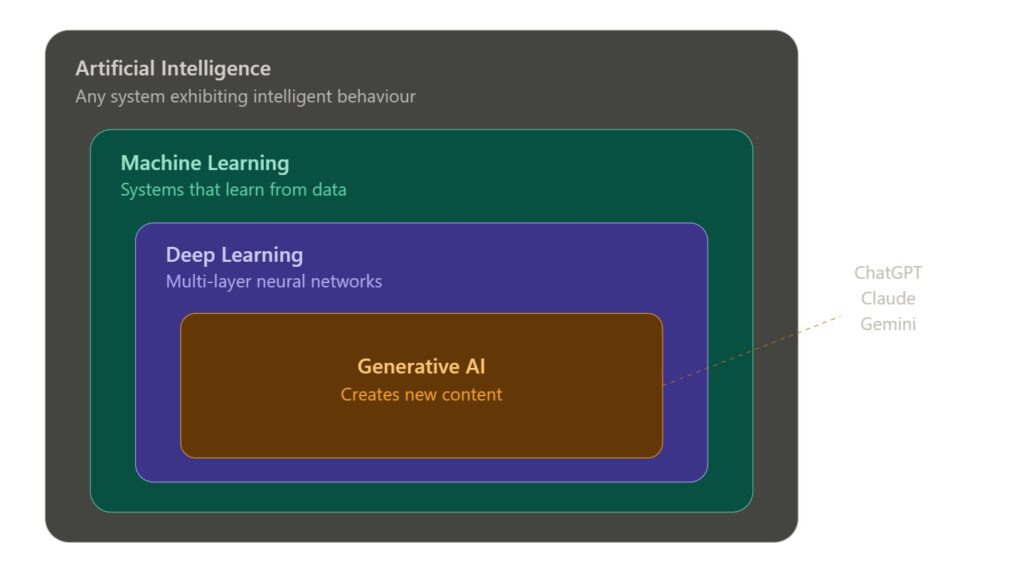

Think of it as nested categories.

Artificial Intelligence is the broadest term. Any system exhibiting intelligent behavior.

Machine Learning is the main approach used to build AI today. Systems that learn from data rather than following hardcoded rules. Most practical AI systems are ML systems.

Deep Learning is a particularly powerful type of ML using multi-layer neural networks. It powers most of what we recognize as modern AI.

Generative AI is a category of deep learning models specifically trained to create new content. It’s the most recent wave and currently the most commercially active area.

Everything in generative AI is machine learning. Everything in machine learning is AI. But not all AI is ML, and not all ML is generative AI.

Why This Matters for Certifications

The certification split reflects this directly.

Traditional ML certifications like Google Professional Machine Learning Engineer and Azure Data Scientist Associate (DP-100) were built around the older paradigm: data pipelines, model training, feature engineering, deployment infrastructure. They assume you’re building and managing models. Note that Microsoft is retiring DP-100 on June 30, 2026, along with several other existing Azure AI credentials, as part of a broader overhaul of its certification portfolio. Check Microsoft’s current certification pages before pursuing any Azure credential.

AI practitioner certifications like AWS AI Practitioner and Azure AI Fundamentals are designed for people who work with AI systems rather than building them from scratch. They cover concepts, use cases, and responsible AI practices.

Generative AI certifications are the newest category. AWS Certified Generative AI Developer – Professional (AIP-C01) and Microsoft’s new Azure AI App and Agent Developer Associate (AI-103, launching June 2026) focus on building applications using foundation models and APIs rather than training models from scratch.

Knowing which category fits your work helps you pick the right cert. A project manager overseeing AI initiatives doesn’t need an ML engineering credential. A developer building chatbots on Azure doesn’t need deep expertise in model training. The right cert depends on what you actually do, or want to do.

The Practical Takeaway

The terminology matters less than the job you’re targeting:

- Working with pre-built AI services and APIs → AI practitioner certs

- Building and deploying ML models → ML engineering certs

- Building generative AI applications → GenAI developer certs

- Managing AI projects and strategy → PMI-CPMAI or similar

The field moves fast and the labels shift. But the underlying split between “using AI” and “building AI” is a reliable guide to where to start.

Certification details verified as of April 2026. Costs and requirements can change; check official vendor pages before registering.

Disclosure: CertTrek earns a commission when you purchase through links on this site, at no extra cost to you. This doesn’t influence our recommendations.